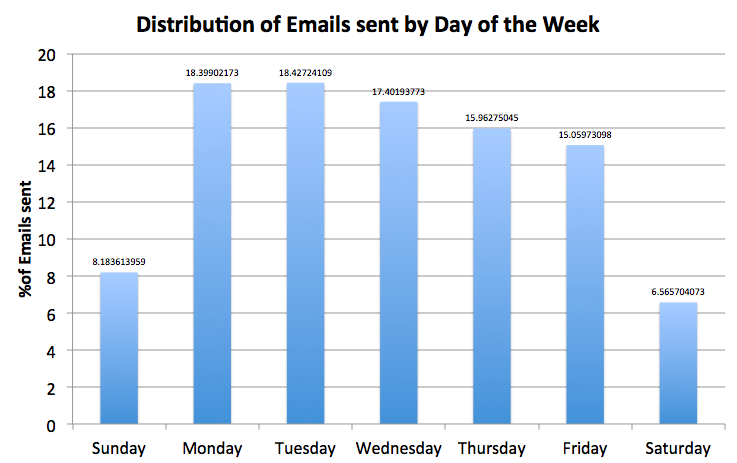

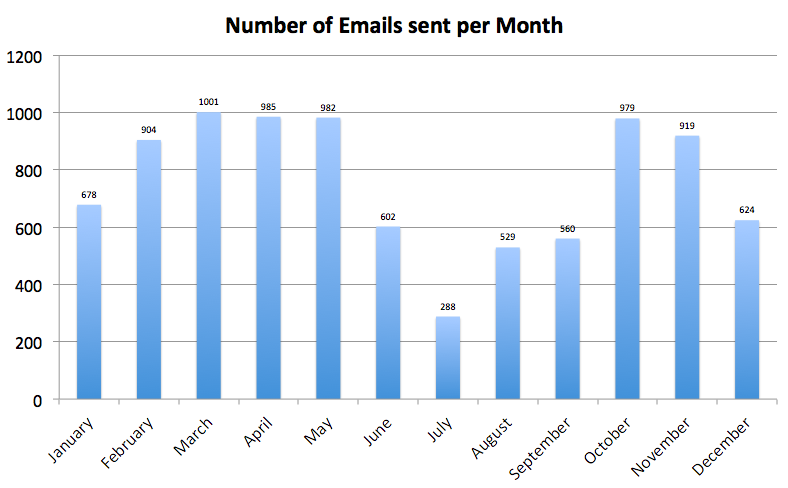

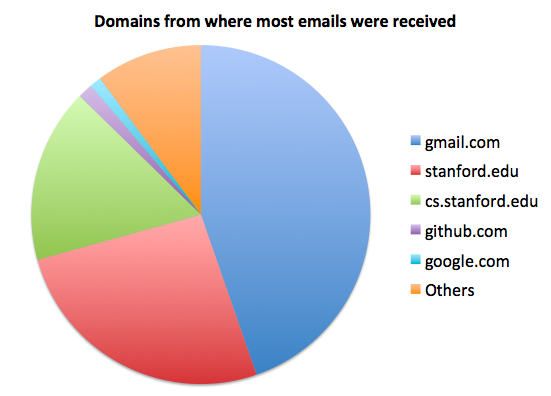

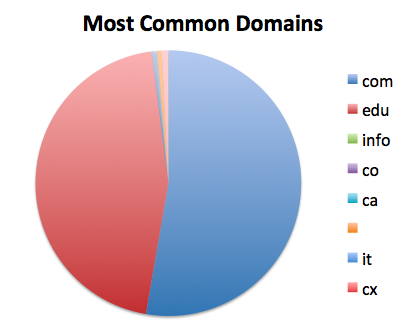

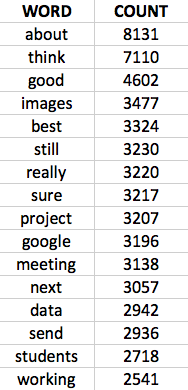

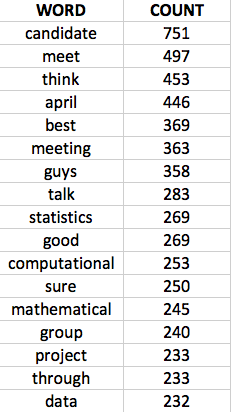

Introduction

I have realized that every morning I follow a very strict ritual. The very first thing I do is groggily check my email. Unfortunately, I have around 15-25 emails to sort through as soon as I wake up. My alarm generally rings around 8:30am. I like to think that's a really early time to wake up, especially for a student. Why do people wake up so early and send out emails? Anyway, over the course of the day I end up checking my email at least 10 times. Since I dedicate so much time to this task, I thought it was about time I did some analysis and figured out what is going on behind my Gmail account. What could I discover? (If you use Hotmail/Outlook or Yahoo Mail, please stop reading this now and never speak to me again. :p)

Analysis Over the Week

As expected, I send most of my emails on weekdays. Also, it looks like there is a steady decrease in emails over the course of the week. So, if you really need to reach me, it looks like your best bet is emailing me on Mondays.

Analysis Over the Year

Even the number of emails I send over the year looks exactly the way I predicted. There is a huge dip in the summer, when I was working for Google and restricted to using my corporate email account instead of my Gmail. There also seems to be a dip over the winter (December and January), when I went back home to visit my family. February seems a little less busy, but it's a short month anyway.

Most Common Domains

It appears I have a knack for emailing Gmail accounts more than any other kind. This seems logical since I coordinate with a lot of people at work through Google Drive. Stanford comes in a close second, while Stanford computer science accounts are third on my list.

Random Domain Analysis

Nothing really interesting here. I send most of my emails to edu and com accounts.

Most Common Correspondences

This part is a little interesting. It appears that the person I send most of my emails to is MYSELF. I have sent about 2400 emails back to myself. Don't worry, I don't have a split personality. This makes perfect sense because I usually send myself emails as a way to transfer documents or short segments of text between my various laptops. It's hard having a work machine, a travel laptop and a home laptop. I should honestly just set up remote login and transfer documents that way. The second person I send the most emails to is my research project partner (1369 emails). Meanwhile, both my co-advisors at Stanford came in at third and fourth place with around 520 emails each. That's more than an email EVERY DAY. I hope they aren't sick of me bugging them so often. It also makes me wonder how many emails they have to deal with if every one of their advisees is sending them an email a day on average. Well, at least I am not wasting time with my emails. It seems like most of it is related to my research. Every other person has received significantly fewer emails from me over the past year.

Word Frequencies

Finally, I decided it would be fun to see what kind of things my emails usually contain. After filtering out common words like my name and other obvious words, I calculated the most frequent words that I have used. Also, because my birthday is in April, I computed the frequencies for the month of April as a comparison. I guess I am NOT wasting time on my emails after all. Overall, it looks like I really like talking about "good" "images" and plan a lot of "meeting"s. You know you are working when "working" is one of your most frequent words. Also, in April, it looks like I was doing a lot of "computational" "mathematics". I don't even remember at this point why those words occur over 200 times each. It also looks like I really wanted everyone to know it was "april", since I used it 446 times.

Conclusion

It's quite clear that by analyzing my emails, Google and obviously the NSA know basically everything they need to about me. They can assume with a high probability that I am a Stanford student who lives in California and that I am probably studying or am really interested in computer science, and specifically interested in "good" "images". Yup, I guess it's obvious that I am a Computer Vision Researcher.
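For anyone who wants to try the same kind of tally on their own mailbox, here is a minimal sketch of the weekday, domain and recipient counts described above. It is not the code behind the charts in this post: it assumes the sent mail has already been parsed into (sent time, recipient address) pairs, and the function name `summarize_sent_mail` and the sample addresses are made up for illustration.

```python
from collections import Counter
from datetime import datetime

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def summarize_sent_mail(messages):
    """Tally sent mail by weekday, recipient domain and recipient.

    `messages` is an iterable of (sent_at, recipient) pairs, where
    `sent_at` is a datetime and `recipient` is an email address string.
    (Hypothetical input format, chosen for this sketch.)
    """
    by_weekday = Counter()
    by_domain = Counter()
    by_recipient = Counter()
    for sent_at, recipient in messages:
        address = recipient.strip().lower()    # treat Me@x and me@x as one
        by_weekday[WEEKDAYS[sent_at.weekday()]] += 1   # Monday is index 0
        by_domain[address.rsplit("@", 1)[-1]] += 1     # text after the last @
        by_recipient[address] += 1
    return by_weekday, by_domain, by_recipient


# Made-up sample data, only to show the shape of the input.
sample = [
    (datetime(2014, 4, 7, 9, 0), "me@gmail.com"),             # a Monday
    (datetime(2014, 4, 7, 10, 30), "partner@stanford.edu"),   # a Monday
    (datetime(2014, 4, 8, 14, 0), "Me@gmail.com"),            # a Tuesday
    (datetime(2014, 4, 12, 11, 0), "advisor@cs.stanford.edu"),  # a Saturday
]

by_weekday, by_domain, by_recipient = summarize_sent_mail(sample)
print(by_weekday.most_common())
print(by_domain.most_common(3))
print(by_recipient.most_common(1))
```

The same `Counter` approach would extend to word frequencies by splitting each message body into words and skipping a list of common words before counting.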


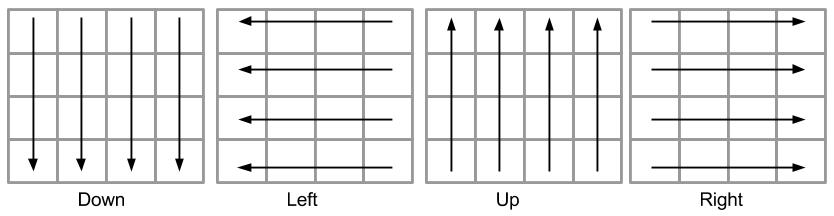

Motivation

Some time ago, just like everyone else, I managed to get addicted to the 2048 game. Whenever I wanted to take a break from work, I would just open up a new tab and begin the repetitious arrow smashing. Watching the tiles fly by was just as much fun as actually trying to mine for that prized 2048 tile. Unfortunately, the game wouldn't just complete in minutes, and I saw myself consistently spending more time on this game than I should. From an academic perspective, I thought it would be interesting to build an artificial intelligence (AI) to play the game for me. I reasoned that if I could get something to play for me, then I would effectively have solved the game and would no longer be inclined to play it and waste my time. I was definitely wrong. Now that I have an AI playing the game for me, I waste time not by playing the game but by simply sitting there and watching the tiles move about, waiting for notable errors in my heuristic.

Artificial Intelligence Explanation

On every turn, the artificial intelligence essentially makes all 4 moves (UP, DOWN, LEFT, RIGHT) and evaluates which one would be the best decision based on which one brings it closer to winning. Since the game is randomized and a random tile is generated at every step, the AI algorithm also generates all possible tiles, with values of 2 and 4, in the available empty cells. It then continues to make moves and attempts to account for all possible random tile generations. Because the AI is able to consider all possible future moves, shouldn't it always be able to win? Unfortunately, because the number of cases to consider grows exponentially and our browsers have limited CPU bandwidth, the algorithm above only looks 6 moves into the future. Check out the Time Calculations section for an analysis of the algorithm's speed. So after looking 6 moves into the future, it uses a heuristic to estimate the value of the moves that it has made. After evaluating all possible moves, it chooses the one that achieves the highest estimated value according to the heuristic.

Artificial Intelligence Algorithm

My two main requirements for the AI were to always win the game (achieve the 2048 tile) and to be fast enough to be rendered seamlessly using client-side JavaScript. The algorithm I used is expectimax. It follows a recurrence relationship in which state encodes the current game state, with the locations and values of all the tiles. Utility(state) is described in the Heuristics section below. PLAYER is an identifier indicating that it is the player's turn to move. After every turn, the BOARD generates a random tile. Prob(state, tile) is the probability of a given tile, with a specific location and value, showing up in the game state described by state. For a given location, the probability of a tile with a value of 2 occurring is 0.9, while the probability of a value of 4 occurring is 0.1. Successor(state, a) returns the state of the game after applying action a to the state. Finally, GenerateTile() adds the tile to the state and returns the new state of the game.

Heuristics

I used three separate heuristics to evaluate the utility of a given game state.

Empty Tiles

The first heuristic was rather simple. Its main aim was to maximize the number of empty tiles on the board. However, every turn, the board generates a new tile. The intuition behind this heuristic was that the only way to move towards a board with more empty tiles is by merging blocks together. Merging blocks aligned perfectly with the main objective of the game, and the heuristic performed rather well.

Monotonicity

The next heuristic attempted to maximize monotonicity on the grid. In other words, the heuristic attempted to arrange the numbers in decreasing order along one of the 4 directions: up, down, left or right.

Gradients

The final heuristic assigned a varying weight to each of the different tiles on the board. Tiles in the corners were assigned a high weight, so that the heuristic optimized for any one of the four corners and pushed the highest valued tiles to one of them. This also increased the surface area of smaller valued tiles next to the newly generated tiles, allowing easy merging without much reordering.

Results

The results for the three heuristics are shown in the table here. The gradient outperforms the other heuristics and is the one that is used in the AI above.
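To make the expectimax description above a little more concrete, here is a minimal Python sketch of the idea. It is not the JavaScript that runs on this page: the board representation (a 4x4 list of lists with 0 for an empty cell), the function names, the particular `GRADIENT` weights and the way `depth` is counted (one level per player move or tile spawn) are all assumptions made for illustration. The 0.9 and 0.1 tile probabilities come from the text, and the sketch additionally assumes every empty cell is equally likely to receive the new tile.

```python
MOVES = ("UP", "DOWN", "LEFT", "RIGHT")

# Hypothetical gradient weights that favour the top-left corner.
GRADIENT = [
    [6, 5, 4, 3],
    [5, 4, 3, 2],
    [4, 3, 2, 1],
    [3, 2, 1, 0],
]


def slide_left(row):
    """Slide one row to the left, merging each equal pair at most once."""
    tiles = [v for v in row if v]
    merged = []
    i = 0
    while i < len(tiles):
        if i + 1 < len(tiles) and tiles[i] == tiles[i + 1]:
            merged.append(tiles[i] * 2)
            i += 2
        else:
            merged.append(tiles[i])
            i += 1
    return merged + [0] * (len(row) - len(merged))


def successor(board, action):
    """Return the board after a move, before any new tile is generated."""
    if action == "LEFT":
        return [slide_left(r) for r in board]
    if action == "RIGHT":
        return [slide_left(r[::-1])[::-1] for r in board]
    cols = [list(c) for c in zip(*board)]          # transpose to columns
    if action == "UP":
        cols = [slide_left(c) for c in cols]
    else:                                          # DOWN
        cols = [slide_left(c[::-1])[::-1] for c in cols]
    return [list(r) for r in zip(*cols)]           # transpose back


def utility(board):
    """Gradient heuristic: weighted sum of tile values."""
    return sum(GRADIENT[r][c] * board[r][c]
               for r in range(4) for c in range(4))


def expectimax(board, depth, player_turn=True):
    """Estimated value of a board, searching `depth` more levels."""
    if depth == 0:
        return utility(board)
    if player_turn:
        # PLAYER node: take the best move that actually changes the board.
        values = [expectimax(nxt, depth - 1, False)
                  for nxt in (successor(board, a) for a in MOVES)
                  if nxt != board]
        return max(values) if values else utility(board)
    # BOARD node: average over every empty cell and both tile values.
    empties = [(r, c) for r in range(4) for c in range(4) if board[r][c] == 0]
    if not empties:
        return expectimax(board, depth - 1, True)
    total = 0.0
    for r, c in empties:
        for tile, prob in ((2, 0.9), (4, 0.1)):
            child = [row[:] for row in board]
            child[r][c] = tile
            total += (prob / len(empties)) * expectimax(child, depth - 1, True)
    return total


def best_move(board, depth=3):
    """Pick the legal move with the highest expectimax value, or None."""
    scored = []
    for action in MOVES:
        nxt = successor(board, action)
        if nxt != board:
            scored.append((expectimax(nxt, depth - 1, False), action))
    return max(scored)[1] if scored else None
```

For example, `best_move([[2, 2, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]], depth=1)` picks `"LEFT"`, since merging the two 2s into the top-left corner scores highest under these weights. Swapping `utility` for an empty-tile count or a monotonicity score would give the other two heuristics the same search.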

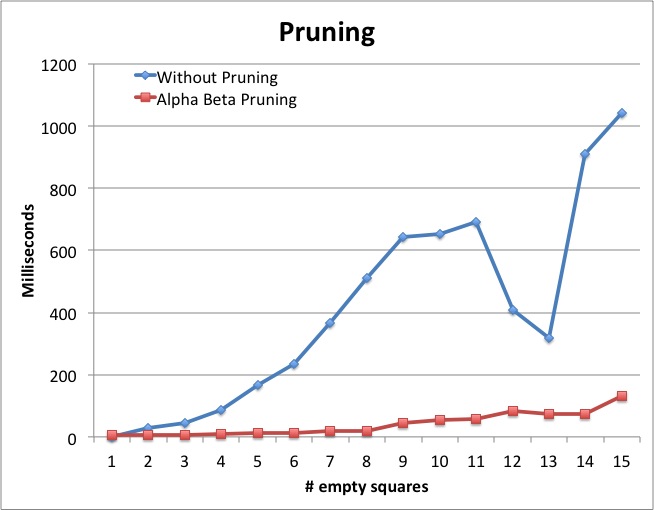

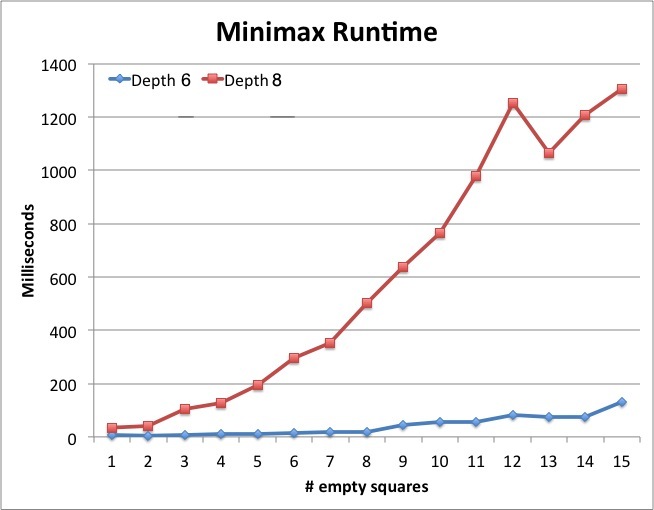

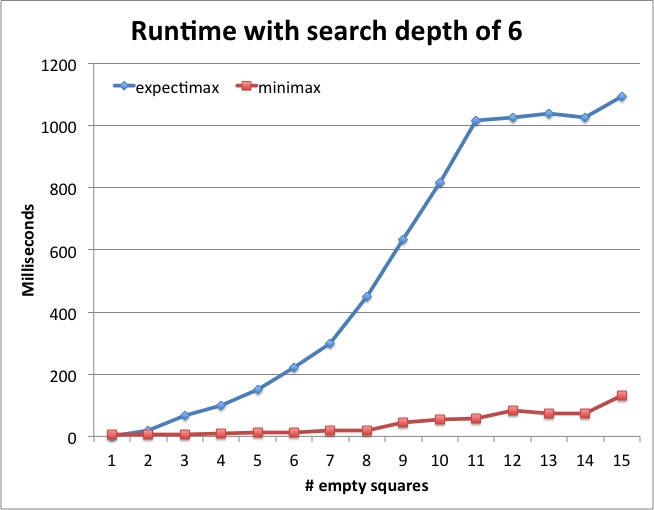

Comparisons to Minimax

I also implemented a minimax algorithm, and the source for that can also be found in the repository. Unlike the expectimax algorithm, minimax optimizes for the worst-case generation of tiles. It assumes that the board is not randomly generating tiles but is doing so to prevent the user from winning. My minimax follows an analogous recurrence relationship, with the same methods as the ones described above for expectimax. Unfortunately, under this assumption minimax performs a lot worse than expectimax. In an attempt to prevent a losing scenario, it fails to take risks and merge the medium sized tiles of value 8 or 16, essentially causing the algorithm to lose more often than it should. However, if this algorithm weren't constrained by rendering the animation, and were implemented on a server, it would be able to search to a greater depth, allowing it to perform a lot better.

Time Calculations

One of the biggest bottlenecks for this algorithm is having to render the game's animation while still running an efficient AI in client-side JavaScript. To that end, I conducted the following benchmarks for the run time of the AI algorithms I implemented.

The algorithm used above is expectimax with a depth of 6, which varies when the number of empty tiles is higher than a given threshold. It assumes that each move takes approximately 450ms to complete along with the animations, in order to give a smooth view of the AI in action.

Main Takeaway

I had a lot of fun implementing this. There are tons of improvements to make and benchmarks to run, but I don't have the time to spend on this. I hope you enjoy this game as much as I have and like watching the AI "do its thing".

Having an office in Times Square implies that you will encounter numerous Elmos outside your office. I can only imagine the look on all the kids' faces when they see multiple Elmos surrounding them. Times Square also provides an easy way to travel to any part of the city, considering that most subway lines intersect there. Additionally, traveling out of the city is just as seamless, with Port Authority a block away. The office itself provides yoga and bootcamp classes to keep employees guilt-free about the free corporate lunches. Showers are also built into the office space to supplement these classes, or even bicycling to work. Finally, the clincher that seals the deal for me is the table tennis and pool tables, which make taking breaks at work both social and entertaining.

The Product

For me, coming out of college, impact was the one real goal I had set. MongoDB, Inc.'s main product is its namesake, MongoDB, a non-relational, JSON-styled document store. It is a product that is not only free to license but also open source. The product is currently used by over 600 customers and numerous other adopters. The downloads are increasing every single month. Impact? Check.

MongoDB is not a perfect product, and it has not been around for too long. One of the most refreshing parts about working at MongoDB is how realistic the engineers are about the product. In my past internships (names politically omitted), the employees were all lulled into a false sense of security that their product was far superior, with few or no faults. At MongoDB, during my first week, I had multiple candid conversations about the problems that the database has and the timeline and steps that need to be taken to fix them. It felt mature of a company to accept its flaws. The lack of arrogance about the product also arises from their trust in their ability to remedy the problems, and from the knowledge that even with these issues, MongoDB still manages to solve innumerable product-based and developer-oriented barriers. Even with its current flaws, the product has accomplished a lot. In the end, no product is perfect; every engineering solution is just an iteration. And I would rather work on a product with interesting HARD challenges than one with mundane tasks. But MongoDB is more than a database. It's an entire ecosystem with drivers in various languages, both server-side and client-side tools, web-based security and backup management systems, and clients with robust demands. I can explain these parts in more detail by talking about the work I have done in the past few months.

The Work

I have worked at Mongo for about 6 months now. In those 6 months I have worked on over 10 different projects (granted, they were of different sizes and scopes). It was liberating to be able to push code on my first day of work at MongoDB. It really exemplifies the urgency in startups.

Internal Tools

My first project was to create a dynamically generated, searchable structure of all the employees in the company. As a Systems Engineer, it was both challenging and refreshing to learn front-end development and use frameworks like d3.js, Backbone, Marionette etc. Working for the Internal Tools team gave me access to a ton of valuable information about both the company and its clients. I was able to understand how the different clients interacted with MongoDB, as well as learn how the entire company was structured and what projects were in progress. During my time in Internal Tools, I also had a chance to work on image processing while using Mongo's GridFS file storage system. I launched all my projects into production in a span of just three weeks.

Quality Assurance

The next group of projects I tackled were all QA related. I wrote automated tests and spent numerous weeks hacking away at the next release, MongoDB 2.6, set to launch in 2014. It was exciting to write performance benchmarks for Mongo's logging system and the aggregation framework's $redact stage, as well as security features like SSL and Kerberos.

MongoDB Management Service

I guess here is where I can go into more detail about how MongoDB is more than just a database company. MongoDB Management Service, MMS, is a web-based solution for managing large-scale MongoDB deployments. It allows customers to Monitor their database visually to understand the types of queries being performed, allowing their engineers to analyze the bottlenecks in their application and optimize appropriately. MMS also enables Automation, the ability to launch as well as terminate instances of their databases in a sharded (distributed) system. Finally, MMS offers Backup of customers' databases to the cloud to prevent data loss from server crashes. For the MMS team, I worked on incorporating and modifying multiple third-party applications, including HipChat and Marketo, and on designing different parts of the application. But my core project involved working with the security team to enable two-factor authentication using Google Authenticator. For countries like India, where there is a large customer base but restrictions on the use of SMS for two-factor authentication, Google Authenticator was a perfect solution to encourage more clients to start using MMS. It was incredibly fun redesigning the authentication architecture around a TOTP algorithm and making sure I accounted for interesting corner cases where SMS and Authenticator clash with each other.

MongoDB Support

I spent some time working with the Support team at MongoDB as well. It was a juxtaposition of annoyance and gratification dealing with customers' problems on sites like Jira, Stack Overflow and Google Groups. It gave me a chance to explore bugs in the database as well as in MongoDB users' own source code, giving me the opportunity to understand the many subtly different ways in which developers use MongoDB.

Kernel Team

The Kernel team is where all the hardcore C++ engineers reside. Along with Friday Whiskeys and a wide range of issues to tackle, it was exhilarating to be in the mix. I worked on the C++ driver for the server, with the main intention of preventing MongoDB's symbols from clashing with system Boost symbols on all the most popular operating systems, including Mac OS, multiple Linux distributions and Windows (why do people still develop on Windows?).

The People

Surprisingly, the best part about my job was my colleagues. Everyone from VPs to new hires was always willing to talk and introduce themselves, with the intention of getting to know you personally and not just discussing your work. Every single question, no matter how trivial, would always be answered. People were always just looking to help each other out. From mentor lunches to New Grad events to company happy hours to pool table conquests, I managed to interact with a large portion of the company, engineers as well as Sales and Human Resources. One of the key parts of MongoDB that stood out was the Skunkworks program, similar to Google's 20% time, where engineers huddle together to come up with and implement new, innovative ideas. Breaking away from our work to enjoy ideation and collaboration was a fun way to meet new people, form new teams and work on random yet useful projects.

The Crux

I wrote thousands of lines of code in each of the following languages: JavaScript, Python, Java and C++. It's a pity I missed out on working in Go. Overall, I worked on systems structures, frontend fiascos, backend bashes and security stresses, while learning to take the lead on my projects and gaining invaluable communication skills.

If I had been told that I would learn so much in such a short period of time, I would probably have laughed. But looking back, I can't help but acknowledge a feeling of loss at not continuing to work at a place where you can push yourself to the point where you are working on multiple teams and multiple projects at the same time. MongoDB is more than a job; it's a playground for innovators and a storehouse of products. Have I made the impact I wanted to make while at Mongo? I can't honestly answer that question. But I do know that thousands of people are now viewing and dealing with the code that I wrote and living with some of the decisions that I had the chance to make. My experience here has been invaluable.

A few weeks ago, I was walking to my office when I saw a gigantic white balloon being blown up right outside my office building. Intrigued, I began investigating and discovered Project Loon.

Brief History

Recently, Google announced its newest endeavor: Project Loon. It hopes to extend the web's reach by providing internet to everyone in the world through a series of high-altitude balloons with attached routers. When Google’s chairman, Eric Schmidt, announced in April that “For every person online, there are two who are not. By the end of the decade, everyone on Earth will be connected,” no one would have guessed that this bold statement would be carried out by Google X, the division in Google in charge of Project Loon. Over the past few years, Google X has released a number of incredible projects, including Google Glass, Self Driving Cars, as well as other projects related to neural networks. Google X is Google’s secret research and development lab that is headed by Sergey Brin himself. The division is rumored to house hundreds of projects related to futuristic technologies which, until now, we have only witnessed in movies and our wildest imaginations.

About ten years ago, no one would have predicted that smartphones would become such an integral part of how we lead our lives, or that the internet would have such a strong influence on educational transparency and cultural integration across multiple continents. An entire genre of jobs has emerged on the web over the last decade, many of which are based solely around spreading knowledge. YouTube, for example, has thousands of “how to” videos. With internet becoming available to millions of people previously cut off from the web, there will be a drastic surge of these videos. Imagine how Wikipedia, Facebook, Twitter and other crowdsourced websites would look after 4.5 billion more people go online and begin contributing.

Background Information

The balloons that Project Loon will use will be inflated with helium and will use solar energy to operate. They are supposed to be controlled through an interface that adjusts their vertical position. Operators will be able to maneuver the balloons around the globe by using wind currents, since air currents separated by a given vertical distance flow in opposite directions. Hence, by controlling a balloon's altitude, it is theoretically possible to move it to its intended location. The problem with this method of control is that the balloons cannot stay in one place for long enough. However, this issue can be overcome by eventually having enough balloons in the sky to ensure that there is a balloon covering every position on the globe. These balloons will not interfere with the flight paths of airplanes, as they will be higher up than the airspace in which airplanes fly. Each balloon will be able to cover approximately 1200 square kilometers. With multiple balloons in one location, the signal strength could also be improved. This allows the internet connection to be optimized, as densely populated areas like cities will need more balloons whereas rural areas will naturally require fewer. Currently each balloon can only stay active for a few weeks, but this problem will hopefully be remedied within the next few months. At this very moment, there are more than a couple of dozen balloons floating around New Zealand, where this project is being beta tested.

Benefits for Google

Initially, when the project was announced, it appeared as if Google was spending millions of dollars on a project providing internet to billions of people without any form of payment in return. So, how will Google make any profit from this project? Considering that Google is an advertising company, increasing the volume of internet users would invariably increase traffic on the world’s leading search engine, Google Search. This growth in search users means that more ads will be displayed, lining Google’s pockets even further. Also, considering that today’s internet marketing model requires purchasers to manually install a router in their houses, Loon will already have a distinct advantage. Adopting Loon as your internet connection would require no external hardware, lowering the barriers that users have to deal with when deciding whether or not to get an internet connection. Google’s competitors that provide similar services, like Time Warner Cable, will automatically have a reduced market share. Space Systems would also be at a disadvantage, since their satellites cost orders of magnitude more to maintain than the Loon balloons do.

Pros for the World

Project Loon is clearly an asset to Google. However, it is also a convenience to the rest of the world. One of the most obvious benefits of the project is the Availability of Information. Assuming all the mechanisms of the project function as planned, every single person who has access to a device with wifi would be able to search for almost any form of media online. Farmers in remote corners of third world countries would be able to research and analyze multiple techniques that could increase their yield, a father would be able to stay in touch with his daughter no matter which township either one of them lived in, and villagers across a country would be able to transparently examine the country's political landscape and vote appropriately. The second benefit is naturally Education. With millions of uneducated children all across the world, this program might be able to provide schooling through online classes on topics ranging anywhere from disaster management to literary analysis. Even without any additional content, these new users would at the very least have access to existing online resources, including W3Schools, Codecademy and many others. Health and Medicine is another area that will be affected by Loon. With globally available data on disease outbreaks and medical breakthroughs, the entire population will be able to adjust to epidemics or adopt new drugs or medications. Loon’s Use of Renewable Energy will greatly influence and inspire future projects as well. Creating an interplay between solar energy, to keep the balloon functional, and wind, to steer it, will help reduce the burden on coal, petroleum and other non-renewable energy sources. Finally, Collaboration between people across the globe will become much easier with constant connectivity to each other through the internet, allowing newer, more complicated projects to arise. For example, NGOs in Africa could clearly demonstrate to their investors in Canada precisely how they are implementing their communal goals.

Concerns

The main problem with launching any hardware project is the certainty of eventual hardware failure. In most cases, the hardware is accessible and can be fixed. However, for airplanes, rockets, satellites, and now Loon balloons, hardware failure is a huge problem, as they cannot be reached. If a Loon balloon fails, it can either remain floating up in the air, making it difficult to bring down, or it might come down in unwanted areas. Both of these scenarios are a huge concern for the stability of the service as well as the safety of people whose lives might be affected by unwanted balloon landings. Another concern over this project is internet privacy, since it gives Google more power over a wider range of consumer behavior. This information can become a security issue if it is shared with government agencies like the NSA.

In conclusion, Project Loon is an ambitious project, and the world stands to benefit greatly from it. But do the pros outweigh the cons?

References:

1. Eric Schmidt: http://www.businessinsider.com/eric-schmidts-bold-internet-prediction-2013-4
2. Google Glass: http://www.google.com/glass/start/
3. Self Driving Cars: http://www.youtube.com/watch?feature=player_embedded&v=cdgQpa1pUUE
4. Neural Network: http://www.eetimes.com/document.asp?doc_id=1266579
5. Sergey Brin: https://en.wikipedia.org/wiki/Sergey_Brin

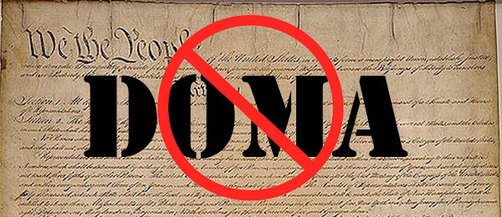

I intend to focus mainly on writing about recent technological inventions, projects and research papers and their impact on today’s society. I aim to make these posts as informative as I can for others as well. Along with rapid technological development, we are also in an era where political reforms are revolutionizing our society. Only about a week ago, the Supreme Court struck down a key section of the Defense of Marriage Act, just in time for the gay pride parade in San Francisco. This is an exciting time, with an extraordinary wealth of changes all around us. In my future blogs, I will spend more time discussing individual issues that I find intriguing. Stay tuned.
